Academia job rumor mill Updated 2025-07-16

Academic journal Updated 2025-07-16

Academic paper Updated 2025-07-16

Absorbing Markov chain Updated 2025-07-16

Academic publisher Updated 2025-07-16

Academic publishing is broken Updated 2025-07-16

- experimentalhistory.substack.com/p/the-rise-and-fall-of-peer-review: The rise and fall of peer review by Adam Mastroianni (2022)

One of the most beautiful things is how they paywall even public domain works. E.g. here: www.nature.com/articles/119558a0 was published in 1927, and is therefore in the public domain as of 2023. But it is of course just paywalled as usual throughout 2023. There is zero incentive for them to open anything up.

Academic term Updated 2025-07-16

A Chinese Ghost Story Updated 2025-07-16

A Chinese Ghost Story sutra Updated 2025-07-16

Appears to be a small section from the Diamond Sutra. TODO find or create a video of it, it is just too awesome.

ACID (database) Updated 2025-07-16

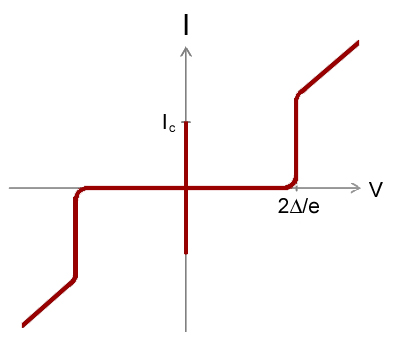

AC Josephson effect Updated 2025-07-16

It is called "AC effect" because when we apply a DC voltage, it produces an alternating current on the device.

By looking at the Josephson equations, we see that if the voltage $V(t)$ is a positive constant, then the phase $\varphi$ just increases linearly without bound.

Wikipedia mentions that this frequency is about $484\,\mathrm{GHz/mV}$, so it is very high, and we are not able to view individual points of the sine curve separately with our instruments.

Also it is likely not going to be very useful for many practical applications in this mode.
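To get a feel for the numbers, here is a minimal sketch that assumes the standard AC Josephson relation $f = 2eV/h$. The function name `josephson_frequency_hz` and the sample voltages are hypothetical, chosen only for illustration.

```python
# Sketch only: assumes the standard relation f = 2 e V / h.
# The constants are the exact SI values of e and h.
ELEMENTARY_CHARGE_C = 1.602176634e-19
PLANCK_CONSTANT_J_S = 6.62607015e-34


def josephson_frequency_hz(voltage_v: float) -> float:
    """Oscillation frequency in Hz for a DC voltage in volts (hypothetical helper)."""
    return 2.0 * ELEMENTARY_CHARGE_C * voltage_v / PLANCK_CONSTANT_J_S


if __name__ == "__main__":
    # Illustrative voltages, not measurements from the text.
    for voltage_v in (1e-6, 1e-3):
        frequency_hz = josephson_frequency_hz(voltage_v)
        print(f"{voltage_v:g} V -> {frequency_hz / 1e9:.1f} GHz")
```

Even a microvolt already gives hundreds of MHz, which is consistent with the oscillation showing up as a solid bar rather than a resolved sine curve on an ordinary oscilloscope.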

An I-V curve can also be seen at: Figure "Electron microscope image of a Josephson junction its I-V curve".

I-V curve of the AC Josephson effect

Voltage is horizontal, current vertical. The vertical bar in the middle is the effect of interest: the current is going up and down very quickly between $\pm I_c$, the Josephson current of the device. Because it is too quick for the oscilloscope, we just see a solid vertical bar.

Superconducting Transition of Josephson junction by Christina Wicker (2016)

Amazing video that presumably shows the screen of a digital oscilloscope doing a voltage sweep as temperature is reduced and superconductivity is reached.

Ackermann function Updated 2025-07-16

To get an intuition for it, see the sample computation at: en.wikipedia.org/w/index.php?title=Ackermann_function&oldid=1170238965#TRS,_based_on_2-ary_function where $S(n) = n + 1$ in this context. From this, we immediately get the intuition that these functions are recursive somehow.
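The recursive structure can also be seen by running it. The following is a minimal sketch of the usual two-argument (Ackermann-Péter) definition; the function name `ackermann` is our own choice, and only tiny arguments are practical because both the value and the recursion depth blow up quickly.

```python
def ackermann(m: int, n: int) -> int:
    """Two-argument Ackermann-Peter function, written as naive recursion."""
    if m == 0:
        # Base rule: A(0, n) = n + 1
        return n + 1
    if n == 0:
        # A(m, 0) = A(m - 1, 1)
        return ackermann(m - 1, 1)
    # A(m, n) = A(m - 1, A(m, n - 1)): the nested call is what makes it grow so fast.
    return ackermann(m - 1, ackermann(m, n - 1))


if __name__ == "__main__":
    # Keep m <= 3 and n small: larger inputs exceed Python's default recursion limit.
    for m in range(4):
        print(m, [ackermann(m, n) for n in range(4)])
```

Each row of the printed table grows faster than the previous one: adding a constant, then roughly doubling, then roughly exponentiating.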

Acousto-optic modulator Updated 2025-07-16

An optical multiplexer!

Abraham Pais Prize for History of Physics Updated 2025-07-16

Action (physics) Updated 2025-07-16

Action role-playing game Updated 2025-07-16

activatedgeek/LeNet-5 Updated 2025-07-16

It trains the LeNet-5 neural network on the MNIST dataset from scratch, and afterwards you can give it newly hand-written digits 0 to 9 and it will hopefully recognize the digit for you.

Ciro Santilli created a small fork of this repo at lenet adding better automation for:

- extracting MNIST images as PNG

- ONNX CLI inference taking any image files as input

- a Python tkinter GUI that lets you draw and see inference live

- running on GPU

Install on Ubuntu 24.10 with:

sudo apt install protobuf-compiler
git clone https://github.com/activatedgeek/LeNet-5
cd LeNet-5
git checkout 95b55a838f9d90536fd3b303cede12cf8b5da47f
virtualenv -p python3 .venv
. .venv/bin/activate
pip install \
  Pillow==6.2.0 \
  numpy==1.24.2 \
  onnx==1.13.1 \
  torch==2.0.0 \
  torchvision==0.15.1 \
  visdom==0.2.4 \
;

We use our own pip install because their requirements.txt uses >= instead of ==, making it random whether things will work or not.

On Ubuntu 22.10 it was instead:

pip install \
  Pillow==6.2.0 \
  numpy==1.26.4 \
  onnx==1.17.0 \
  torch==2.6.0 \
  torchvision==0.21.0 \
  visdom==0.2.4 \
;

Then run with:

python run.py

This script throws a billion exceptions because we didn't start the Visdom server, but everything works nevertheless, we just don't get a visualization of the training.

The terminal outputs lines such as:

Train - Epoch 1, Batch: 0, Loss: 2.311587

Train - Epoch 1, Batch: 10, Loss: 2.067062

Train - Epoch 1, Batch: 20, Loss: 0.959845

...

Train - Epoch 1, Batch: 230, Loss: 0.071796

Test Avg. Loss: 0.000112, Accuracy: 0.967500

...

Train - Epoch 15, Batch: 230, Loss: 0.010040

Test Avg. Loss: 0.000038, Accuracy: 0.989300

One of the benefits of the ONNX output is that we can nicely visualize the neural network on Netron.

Advanced quantum field theory lecture by Tobias Osborne (2017) Lecture 2 Updated 2025-07-16

Bluefors Updated 2025-07-16

activatedgeek/LeNet-5 run on GPU Updated 2025-07-16

By default, the setup runs on CPU only, not GPU, as could be seen by running htop. But by the magic of PyTorch, modifying the program to run on the GPU is trivial:

cat << EOF | patch
diff --git a/run.py b/run.py
index 104d363..20072d1 100644
--- a/run.py
+++ b/run.py
@@ -24,7 +24,8 @@ data_test = MNIST('./data/mnist',
 data_train_loader = DataLoader(data_train, batch_size=256, shuffle=True, num_workers=8)
 data_test_loader = DataLoader(data_test, batch_size=1024, num_workers=8)

-net = LeNet5()
+device = 'cuda'
+net = LeNet5().to(device)
 criterion = nn.CrossEntropyLoss()
 optimizer = optim.Adam(net.parameters(), lr=2e-3)

@@ -43,6 +44,8 @@ def train(epoch):
     net.train()
     loss_list, batch_list = [], []
     for i, (images, labels) in enumerate(data_train_loader):
+        labels = labels.to(device)
+        images = images.to(device)
         optimizer.zero_grad()

         output = net(images)
@@ -71,6 +74,8 @@ def test():
     total_correct = 0
     avg_loss = 0.0
     for i, (images, labels) in enumerate(data_test_loader):
+        labels = labels.to(device)
+        images = images.to(device)
         output = net(images)
         avg_loss += criterion(output, labels).sum()
         pred = output.detach().max(1)[1]
@@ -84,7 +89,7 @@ def train_and_test(epoch):
     train(epoch)
     test()

-    dummy_input = torch.randn(1, 1, 32, 32, requires_grad=True)
+    dummy_input = torch.randn(1, 1, 32, 32, requires_grad=True).to(device)
     torch.onnx.export(net, dummy_input, "lenet.onnx")

     onnx_model = onnx.load("lenet.onnx")
EOF

and leads to a faster runtime, with less user time, as now we are spending more time on the GPU than CPU:

real 1m27.829s
user 4m37.266s
sys 0m27.562s
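The patch hardcodes `device = 'cuda'`, which fails on machines without a usable GPU. A possible variation, not part of the original patch, is to fall back to the CPU when CUDA is unavailable. The helper name `pick_device` is hypothetical; `torch.cuda.is_available()` is the PyTorch call normally used for this check, and the import is guarded so that the sketch also runs where PyTorch is not installed.

```python
def pick_device(cuda_available: bool) -> str:
    """Return the PyTorch device string to use (hypothetical helper)."""
    return "cuda" if cuda_available else "cpu"


if __name__ == "__main__":
    try:
        import torch  # Only needed for the availability check.
        cuda_available = torch.cuda.is_available()
    except ImportError:
        # Assumption for this sketch: without PyTorch we just report "cpu".
        cuda_available = False
    device = pick_device(cuda_available)
    print(device)
    # In run.py this value would replace the hardcoded string, e.g.:
    #   net = LeNet5().to(device)
```

With this change the same script runs unmodified on both CPU-only and GPU machines, at the cost of silently being slower when the GPU is missing.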